(25th Home) (1963-1986) (1986-1994) (1994-2001) (2001-2005) (2005-2007) (2007-2013)

The Movement Years

With nearly fifteen years under its belt, OSC began experiencing the need to expand, and the facility physically relocated – twice! ACCAD established facilities in Mount Hall and then moved them to 1224 Kinnear. The BALE facility was created. Efforts began in Appalachia and computer clusters were sent to the four corners of the state. And network traffic on the backbone was moved from copper to glass.

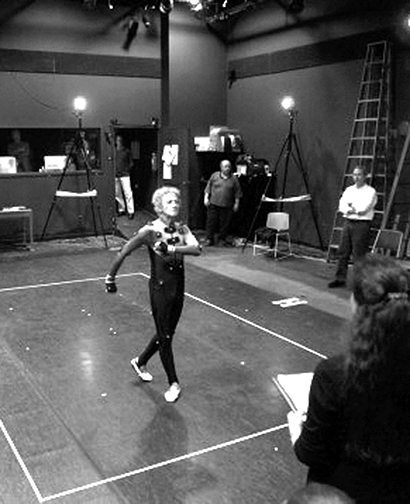

ACCAD Motion Capture Lab

In February 2001, state-of-the-art optical motion capture equipment from Vicon was installed for ACCAD in Mount Hall on Ohio State’s West Campus. Two months later, in April, world-renowned mime artist Marcel Marceau made his third visit to the Ohio State campus, spending a day of his two-week residency performing his piece, “The Eater of Hearts,” and several signature movements in ACCAD’s motion capture lab for permanent archival. ACCAD’s motion capture lab was moved to its current location at 1224 Kinnear in the fall of 2001.

On April 9, 2001, Joel Saltz, Ph.D., accepted a position with the OSU College of Medicine and Public Health and in the Department of Computer and Information Science, with a special appointment as an OSC Senior Fellow.

As a professor and chair of medical informatics at OSU, Saltz would collaborate with researchers throughout the university, including engineering, biological and physical sciences, to develop sophisticated systems to support diverse research pursuits.

The Cluster Ohio Project

Also in April, OSC announced the Cluster Ohio Project, a hardware grant initiative of OSC, the Ohio Board of Regents and the OSC Statewide Users Group to encourage Ohio faculty to build local computing clusters using systems that had completed their service at OSC. Several months later, OSC's HPC Division announced that computing systems were awarded to nine Ohio higher education institutions. In addition to the processing units, OSC provided Cluster Ohio Project recipients with onsite maintenance, software, training, and system administration advice.

The cluster grant recipients were:

- Dr. Jacques Amar, University of Toledo

- Dr. Michael Crescimanno, Youngstown State University

- Dr. Charlotte Elster, Ohio University

- Dr. Paul Farrel, Kent State University

- Dr. John Gallagher, Wright State University

- Dr. Kathy Liszka, University of Akron

- Dr. Austin Melton, Kent State University

- Dr. Bin Wang, Wright State University

- Dr. Edward White, Case Western Reserve University

Sun COE-HPCE

Also in April, OSC was selected as a Sun Center of Excellence in High Performance Computing Environments. The Sun COE-HPCE was a collaborative project between OSC, OSU, the University of Cincinnati/Cincinnati Children's Hospital and the University of Akron. The combined investment totaled more than $7 million. As a Sun Center of Excellence, OSC developed and integrated science and business portals, focusing on life science applications. A collaborative testbed infrastructure and distributed storage from Sun were made available through OARnet’s connection to Internet2 to researchers working on a variety of applications. In addition, Sun's visualization technology allowed users to “see” the results of their research.

DoD PETT Contract

Near the end of May, the U.S. Department of Defense awarded a $108 million User Productivity Enhancement and Technology Transfer (PETT) contract to OSC, Mississippi State University and the San Diego Supercomputer Center. OSC competed nationally in conjunction with the other two centers for the contract. This award to support the High Performance Computing Modernization Program was one of the largest in Defense Department history to further academic research and training. The total portion granted to OSC was at least $27 million during a performance period of up to eight years.

Maui Supercomputing Center Contract

Two days later, OSC officials announced that the Center was a partner with the University of Hawaii in being awarded the contract to operate and manage the Maui Supercomputing Center. The contract, which began Oct. 1 and had a potential value of $181 million, was awarded through the Air Force Research Laboratory. The University of Hawaii team for the Maui Supercomputing Center consisted of UH, Boeing Rocketdyne Technical Services and Science Applications International Corporation (SAIC), along with the Ohio Supercomputer Center, New Mexico Tech and Textron.

Platform Lab

Later in the summer, Governor Taft's Technology Action Board granted OSC and the Business Technology Center $255,000 through the Ohio Department of Development to create Platform Lab, a leading-edge, shared-software testing and development facility. The goal of Platform Lab was to provide a technology resource that would give Ohio firms a competitive advantage by testing technology solutions affordably. Today, Platform Lab operates as part of TechColumbus and provides technology infrastructure to commercial firms around the world.

Access Appalachia

OSC participated in Access Appalachia in June of 2001, an effort by Ohio to assess the Appalachian region's information infrastructure and help design a blueprint for network advancement. This project provided a technology assessment of the region, analyzed citizen and business usage of the network and developed an infrastructure plan for network advancement in the twenty-nine county area supported by the Appalachian Regional Commission. Access Appalachia provided data about the region's infrastructure, provided a regional approach to boost electronic commerce and laid the groundwork for continued public- and private-sector investment in the region's information infrastructure. Work teams included participants from several organizations, including OSC’s Technology Policy Group, Ohio University, the University of Akron, IT Alliance of Appalachian Ohio, Hocking College and Schottenstein, Zox & Dunn.

Maria Palazzi assumed the directorship of ACCAD when Carlson became Chair of the OSU Department of Design. Palazzi was an alumna of the Ohio State art programs, earning her master’s degree in Art Education, specializing in Computer Animation, and a bachelor of science in industrial design in Visual Communication Design from OSU. She also was a senior animator and director for Cranston/Csuri Productions from 1983 to 1987.

Itanium Linux Cluster

In August, OSC engineers installed a 146-CPU SGI 750 Itanium Linux Cluster, described by SGI as one of the fastest in the world. The system – with 292GB memory, 428GFLOPS peak performance for double-precision computations and 856GFLOPS peak performance for single-precision computations – followed the Pentium® III Xeon™ cluster that had been operated at OSC for the preceding 18 months. This system was the first Itanium processor-based cluster installed by SGI.

Then, in October, the engineers installed four SunFire 6800 midframe servers, with a total of 72 UltraSPARC III processors.

Dixon Wins TeleCon Award

Later that month at the TeleCon 2001 Conference & Expo, Bob Dixon and Internet2 were presented with the first place award for “Most Innovative Use in Videoconferencing” in the Applications/User category. Dixon won the award for the worldwide Megaconference H.323 Internet videoconferencing events he organized. Just a few months later, in April, the American Distance Education Consortium (ADEC) gave its Infrastructure Award to Bob Dixon for organizing the Megaconferences.

According to Dixon, “Because of Megaconference, many more people are now aware of Internet videoconferencing and use it regularly. Equipment has been installed in many new locations and in countries, which had none before, fostering new collaborations and friendships.”

Cray Collaboration Agreement

In November, OSC officials signed an agreement to collaborate with Cray on assessing technologies for what was expected to be the world’s most powerful supercomputer product. As part of the 14-month agreement, OSC would help Cray evaluate several I/O node technologies and data archiving tools under consideration for the Cray SV2™ product due out in the second half of 2002. Cray and OSC signed the memorandum of understanding at SC2001 in Denver, Colo.

In January 2002, the Platform Lab hosted a grand opening event at its facility at the Business Technology Center on Kinnear Road. The event signaled that the Lab was open for business, with the first customers already testing software applications. The Lab was available to any organization or business interested in using lab platforms and resources to enhance business productivity. OSC contacts for Platform Lab inquiries included Terry Lewis and Steve Clark.

OSC Systems Move to SOCC

In the spring of 2002, OSC engineers moved the Center’s supercomputing and storage systems to a private suite on the fourth floor of the State of Ohio Computer Center (SOCC). The SOCC provided OSC with a secure and reliable facility with custom-based infrastructure, initially on a second floor area belonging to the Department of Administrative Services.

The SOCC was built to centralize state agency data centers in one location, solving the major electrical, mechanical, and security problems common to the operation of all large data centers. The state-of-the-art facility provided maximum security, climate control and fully redundant electrical and mechanical support systems. The facility contained 360,000 square feet of space, including a 225,000 square foot computer room.

“The SOCC facility provides full uninterruptable power service (UPS) for all OSC systems. This will eliminate power outages and help reduce other hardware failures,” stated Al Stutz, OSC associate director. “Inconsistent power creates problems for computers. The SOCC gives us, for the first time, a UPS system robust enough to handle power demands from the Cray systems and clusters.”

The first phase of the move in April proceeded without a hitch, as existing clusters at the SOCC were moved two floors to OSC’s new space. The second phase transferred existing systems from the Kinnear Road Center space, leased for more than a decade from OSU, to the SOCC. By the middle of May, all OSC public access systems were moved to the SOCC, including the Sun Center of Excellence in High Performance Computing Environments (COE-HPCE) systems, all cluster systems, mass storage systems, the SGI Origin 2000, Cray SV1 and all support systems. The statewide software license servers, WEBCT servers, and OSC web server were all housed at the SOCC as well. Teams from Compaq, Cray, Sun, and IBM worked closely with OSC staff to reassemble and test the systems.

In the midst of the move, OSC engineers in March installed a 256-CPU AMD Linux Cluster. The 32-bit Massively Parallel Processing (MPP) system featured one gigabyte of distributed memory, 256 AMD Athlon processors running at 1.4 and 1.53 gigahertz, and a Myrinet and Fast Ethernet interconnect.

OARnet Leadership, Services Changes

The start-up fiber-optics company from which OARnet had considered buying “dark fiber” decided not to go into business. Doug Gale started assessing existing dark-fiber lines, but resigned from OARnet before taking action. Al Stutz was appointed OARnet director and established several statewide committees to advise on various aspects of a new dark-fiber network. A design consisting mainly of several large loops emanating from Columbus was adopted to give redundancy in case of line breaks.

Stutz discontinued some OARnet peripheral services, such as web page services, that were not covering costs, while suggesting alternate service providers to the customers. He consolidated the OARnet and OSC business offices and moved all of the OARnet offices to available space at 1224 Kinnear Rd.

BALE Completion

In July, OSC officials put the finishing touches on a $1.5 million, 7,040-square-foot expansion called the Blueprint for Advanced Learning Environment, or BALE. OSC deployed more than 50 NVIDIA Quadro™4 900 XGL workstation graphics boards to power the BALE Cluster for volume rendering of graphics applications.

BALE provided an environment for testing and validating the effectiveness of new tools, technologies and systems in a workplace setting, including a theater, conference space and the OSC Interface Lab. BALE Theater featured about 40 computer workstations powered by a visualization cluster. When workstations were not being used in the Theater, the cluster was used for parallel computation and large scientific data visualization.

BALE Conference Room supported standard and advanced audiovisual presentations. Several options for advanced visualizations were available, including support from the Interface Lab, InterPlay Lab and Access Grid. The BALE facility also supported staff digital production needs with a 30-sq.-ft. sound-insulated booth for audio recording.

In October, OSC engineers installed the 300-CPU HP Workstation Itanium 2 Linux zx6000 Cluster. OSC selected HP’s computing cluster for its blend of high performance, flexibility and low cost. The HP cluster used Myricom's Myrinet high-speed interconnect and ran the Red Hat Linux Advanced Workstation, a 64-bit Linux operating system.

Cluster Ohio Project Phase Two

In November, the Center announced the second phase of the Cluster Ohio project. The following March, it began distributing Itanium components to five recipients:

- Dr. Jianping Zhu, University of Akron

- Dr. Stephen Wright, Miami University

- Dr. C. K. Shum, The Ohio State University

- Dr. Nikolaos Bourbakis, Wright State University

- Dr. David Robertson, Otterbein College

Throughout 2002, OARnet, ITEC-Ohio and the OSU Office of the CIO were developing the Transportable Satellite Internet System, a satellite trailer used for distance learning and special events in rural areas and at conferences where reliable terrestrial Internet connectivity was unavailable. The partner organizations, led by Bob Dixon, had received a $65,000 grant from ADEC to build and operate the system. In January 2003, Microsoft’s Bill Gates nominated the TSIS project for the ComputerWorld Honors Program.

OSC Networking Operations

In June 2003, a new OSC advisory council was formed to provide direct input into OSC and OSC Networking operations. The new advisory group consisted of the Chief Information Officers (CIOs) of the state's Inter-University Council (IUC) institutions. The OSC Information and Research Officers Advisory Council would actively participate in long-range strategic planning, give technology leadership and management advice, and have the authority to establish subcommittees for specific tasks.

On June 30, the OSC Governing Board appointed Dr. Stanley C. Ahalt, OSU professor of Electrical Engineering, as OSC’s Executive Director, as Pitzer returned to his faculty position in chemistry. For several years, Ahalt had worked as academic lead in Signal and Image Processing (SIP) in the Department of Defense (DoD) High Performance Computing Modernization Program’s Programming Environment and Training (HPCMP PET) initiative. He possessed an active research program that had brought in more than $4 million through his collaborations with the Army Research Laboratory (ARL), Wright Patterson Air Force Base, and the Defense Advanced Research Projects Agency (DARPA), in addition to the National Science Foundation, National Institutes of Health, and industrial partnerships.

“We are very fortunate to have in Ohio one of the best people in the world to lead OSC,” said Dr. Joe Steger, Governing Board Chair and University of Cincinnati President. “After an international search, it became evident that Dr. Ahalt, an outstanding scholar, researcher and leader, is the right match for OSC.”

In July, the Ohio SchoolNet Commission and the Ohio Department of Education joined an OSC Networking initiative to build the nation’s most advanced statewide fiber optic service for education and research. The network would later be named the Third Frontier Network. In the fall, OARnet would pay $4.6 million for 20-year Indefeasible Rights of Use (IRUs) for an initial backbone dark-fiber package from Spectrum Networks, which leased fiber from:

- American Electric Power

- Williams Communications (Wiltel)

- American Fiber Systems (AFS)

Transportable Satellite Internet System

Also during the summer of 2003, the Transportable Satellite Internet System (TSIS) was used to send live educational broadcasts to schools across the country from the re-enactment of the Lewis and Clark Expedition from St. Louis to the Pacific Ocean, organized by Bob Dixon. A year later, in June 2004, Dixon would receive the “Infrastructure Development Award” from ADEC for his work on the Transportable Satellite Internet System.

SGI Altix 3700

In September, OSC engineers installed an SGI Altix 3700 system to replace its SGI Origin 2000 system and to augment its HP Itanium 2 Cluster. The Altix was a non-uniform memory access system with 32 Itanium 2 processors and 64 gigabytes of memory, and it ran the Linux operating system. OSC's HP Cluster also used Itanium 2 processors and ran Linux. The two systems were distinguished by the way memory was accessed and by its availability to any or all processors.

Cluster Ohio Phase Three

In October, OSC announced the third round of the Center’s Cluster Ohio project, providing researchers around the state with five AMD Athlon clusters, each with 24 compute nodes, one login node and one storage node. The clusters were awarded in February 2004 to:

- Dr. Jacques Amar, Dr. Jon Eric Bjorkman, and Dr. Constantine E. Theodosiou, University of Toledo

- Dr. Scott Hooper, Ohio University

- Dr. Christopher Kochanek, Dr. David H. Weinberg, Dr. Anil K. Pradhan and Dr. Richard W. Pogge, The Ohio State University

- Dr. Walter R. Lambrecht, Dr. John Ruhl, Dr. Rolfe G. Petschek, Dr. Philip L. Taylor, Dr. Lawrence Krauss, Dr. Robert Brown and Dr. Glenn Starkman, Case Western Reserve University

- Dr. Wolfgang Windl, Dr. Ju Li, and Dr. Yunzhi Wang, The Ohio State University

Springfield Supercomputing Center

In December, OSC received $6 million from the U.S. Department of Energy to establish a supercomputing center in Springfield, Ohio. OSC’s Kevin Wohlever was named director of Springfield Operations. OSC’s plans for Springfield’s PrimeOhio Corporate Park included establishing a satellite operation that would focus on data management and mining, as well as remote data mirroring across high performance networks. An important secondary mission of the center was to support multi-agency cooperation on critical areas of scientific computing. Congressman David Hobson (R-Springfield) worked with the Turner Foundation, a nonprofit economic development organization that supports Clark County, to start the Springfield Research Park. After the DoE funds ran out, OSC-Springfield closed its doors in 2007.

Pentium 4 Linux Cluster

Also that month, OSC engineers installed a 512-CPU Pentium 4 Linux Cluster. Replacing the AMD Athlon cluster, the P4 doubled the existing system’s power with a sizable increase in speed. With a theoretical peak of 2,457 gigaflops, the P4 cluster contained 256 dual-processor Pentium 4 Xeon systems with four gigabytes of memory per node and 20 terabytes of aggregate disk space. It was connected via gigabit Ethernet and used Voltaire InfiniBand 4x HCAs and a Voltaire ISR 9600 InfiniBand switch router for its high-speed interconnect.

Parallel Virtual File System

In 2003, several partner organizations released the Parallel Virtual File System (PVFS), an open-source parallel file system that distributes file data across multiple servers and provides for concurrent access by multiple tasks of a parallel application. PVFS was jointly developed by OSC, the Parallel Architecture Research Laboratory at Clemson University and the Mathematics and Computer Science Division at Argonne National Laboratory. The National Aeronautics and Space Administration (NASA), the Department of Energy, the National Science Foundation and other government and private agencies funded the PVFS development project, beginning in 1993. In 1999, a new version of PVFS was proposed, and OSC’s Pete Wyckoff helped complete the project.

Third Frontier Network Initiative

In January, the Federal FY 2004 Consolidated Appropriations Act directed $5.1 million to the Ohio Board of Regents (BOR) to fund the development of the Third Frontier Network initiative, helping to make Ohio the world’s leader in using state-of-the-art computer networking to improve education, research and medical care. The bill provided $3.4 million in funding through the Department of Health and Human Services (HHS), as well as $1.7 million from the U.S. Department of Education. The HHS funding, among other things, funded connections linking the state’s academic medical centers with Ohio’s children’s hospitals and select community hospitals. The connections enhanced the ability of physicians to communicate over high-quality television and Internet links, improved access to specialists in underserved areas and enabled collaborative educational and research efforts. The Education funding, as one of its core activities, created a science education network.

IBM Mass Storage System

Also in January, OSC engineers installed the IBM Mass Storage System. IBM would supply a virtualized and autonomic storage infrastructure to power OSC’s research applications, increasing OSC’s data storage capacity five-fold over its previous system and resulting in over 600 terabytes – or 600 trillion bytes – of physical storage capacity. OSC had already begun phasing in IBM TotalStorage FAStT storage servers, TotalStorage SAN File System and TotalStorage SAN Volume Controller.

In February, OSC and three state medical centers received $350,000 for pediatric cancer research as part of the federal FY2004 Omnibus Appropriations bill. The grant was used to apply new techniques developed at the National Cancer Institute's Advanced Biomedical Computing Center (NCI-ABCC) to the study of children's diseases. Research results would accelerate the insight and understanding of cancer, leading to improved diagnostics, treatments and even new prevention options. The University of Cincinnati Children’s Hospital and Medical Center, the Medical College of Ohio in Toledo and The Ohio State University Medical Center participated in the one-year pilot project coordinated by OSC.

Later in the month, OSC announced an upgrade to its SV1ex vector supercomputer that increased its processing capacity by 33 percent. With the addition of eight new processors, peak performance was then 64 gigaflops, delivering the computing power of roughly 64 billion calculators all yielding an answer each and every second. The upgrade significantly increased the performance capacity of a system that had originally been purchased in 1999 and had proven to be a workhorse for the research community.

The OSC-Springfield offices would officially open in April 2004. Over the next several months, OSC engineers would install the 16-MSP Cray X1 system, the Cray XD1 system and the 33-node Apple Xserve G5 Cluster at the Springfield office. A 1-Gbit/s Ethernet WAN service linked the cluster to OSC’s remote-site hosts in Columbus. The G5 Cluster featured one front-end node configured with four gigabytes of RAM, two 2.0 gigahertz PowerPC G5 processors, 2-Gigabit Fibre Channel interfaces, approximately 750 gigabytes of local disk and about 12 terabytes of Fibre Channel attached storage.

Grant Brings Connectivity to Southeast Ohio

Near the end of August 2004, OARnet, ITEC-Ohio and OSU engineers, led by Bob Dixon, installed a satellite dish, LAN and WAN antennae that provided Internet connectivity to New Straitsville, Ohio. Later, they also installed similar systems in four additional small towns in southeast Ohio.

The Internet connectivity provided educational services to adult learners and job-training opportunities to low-income residents, helping to improve their quality of life and increase their standard of living, and it provided a valuable resource to police, fire, libraries, and other community services. The projects were funded by a grant from the Governor’s Office of Appalachia.

In October, OSC engineers installed three SGI Altix 350s and upgraded the Itanium 2 Linux Cluster to 520 CPUs. The Altix 350s were each configured with 16 processors for SMP and large-memory applications. They included 32 GB of memory, 16 1.4-gigahertz Intel Itanium 2 processors, 4 Gigabit Ethernet interfaces, 2-Gigabit Fibre Channel interfaces, and approximately 250 GB of temporary disk.

Blue Collar Computing

In November at SC2004 in Pittsburgh, Stan Ahalt delivered the keynote speech, “Toward a Blue Collar Computing Economy.” As more than 1,000 international audience members listened, Ahalt explained that HPC had reached a critical juncture as economic forces continued to shape its market segment. Ahalt argued that a fundamental shift in the HPC market – the addition of Blue Collar Computing – needed to take place to revitalize its leadership in computational science, engineering and product design. He said several barriers must be removed for industry to use HPC effectively. These barriers included the lack of an adequately trained workforce, limited exposure to HPC solutions, and a focus in the supercomputing community on “Grand Challenge” problems instead of commercial problems.

Third Frontier Network Launch

On the last day of November, federal and state officials, industry leaders and university researchers gathered at The Ohio State University and several participating universities across the state to launch the Third Frontier Network, the most advanced statewide, fiber-optic network for education, research and economic development.

“When other states work to develop a statewide network to help share information and create jobs, Ohio will light the way,” Governor Bob Taft said. “The TFN will transform teaching and learning and will accelerate the commercialization of new knowledge throughout Ohio.”

Albert A. Frink Jr., assistant secretary for manufacturing and services at the U.S. Department of Commerce, delivered featured remarks and assisted Gov. Bob Taft in officially “lighting the network.” As the assistant secretary, Frink advocated, coordinated and implemented policies designed to help U.S. manufacturers compete globally.

The new network connected Ohio’s universities and colleges with each other, their business partners, Ohio’s federal labs, hospitals and K-12 schools as well as Internet2, a national high-performance backbone network for advanced networking application development. Almost all of Ohio's colleges and universities were using the TFN fiber-optic backbone, with 11 higher education institutions having direct access via last-mile connections. Additional campuses, their industry research partners, Ohio’s federal facilities and primary and secondary schools would start connecting during the summer of 2005 as finances and logistics permitted.

“Today, we are embarking on a new era of communication and collaboration,” said Rod Chu, chancellor of the Ohio Board of Regents. “Over the Third Frontier Network, we will pool Ohio’s best minds and share its best resources with the aim of reclaiming the state’s heritage of leading the nation in innovation and production.”

The Ohio Board of Regents had committed $19 million for the construction of the TFN and supported its ongoing operation through $3 million in annual funding for the networking arm of OSC. TFN’s backbone would operate at the highest capacity (OC-48) of any statewide education and research network in the country. Officials planned to create a research link, starting in 2005, that would quadruple the research bandwidth (OC-192) to participating scientific and research communities.

The technical highlight of “lighting” the network was a statewide, online medical consultation on a virtual model of the mouse genome to seek clues to the cause of human genetic birth defects. The consultation involved researchers at the Wright State University School of Medicine; the Medical College of Ohio; the Cleveland Clinic, connecting from Cleveland State University; the Northeastern Ohio Universities College of Medicine, connecting from the University of Akron; the Genome Research Institute at the University of Cincinnati; and the Ohio University College of Osteopathic Medicine.

To set the stage for the videoconference demonstration, Dr. David Millhorn of the University of Cincinnati’s Genome Research Institute explained the vital importance of this field of study and the impact of new broadband technologies on that research. Using a beta (pre-release) version of high-quality videoconference software and hardware, Millhorn spoke to audiences in Columbus and around the state at 60 frames per second, twice the then-standard videoconference rate of 30 frames per second.

And, on Dec. 21, 2004, the Platform Lab received more than $1.1 million from a Wright Project grant to expand its commercial capabilities across the TFN. With the Wright Project grant, the lab created four branch location access points along the statewide fiber-optic network. Platform Lab would develop a wide area fiber channel test infrastructure and be available to commercial clients and academic institutions for developing and validating high bandwidth intensive distributed applications.


(May 15)
